Only after combing through YouTube tutorials I understood that it is very important to correctly set up the mesh. When I uploaded an existing 3DPG face to Blender, I struggled to get the mouth positions to function. And you can come back to this Blender animation setup time and time again. It is hard to set it up properly, but when it is done, your mesh will speak any speech script you upload. Alternatively, you can download the automatic lip-syncing plugin “Rhubarb”. I would only use this method for a short speech animation, like greetings, etc. This is a precise but very time consuming process. Then start saving keyframes for every sound effect to create your desired speech (viewing a YouTube tutorial is highly recommended).

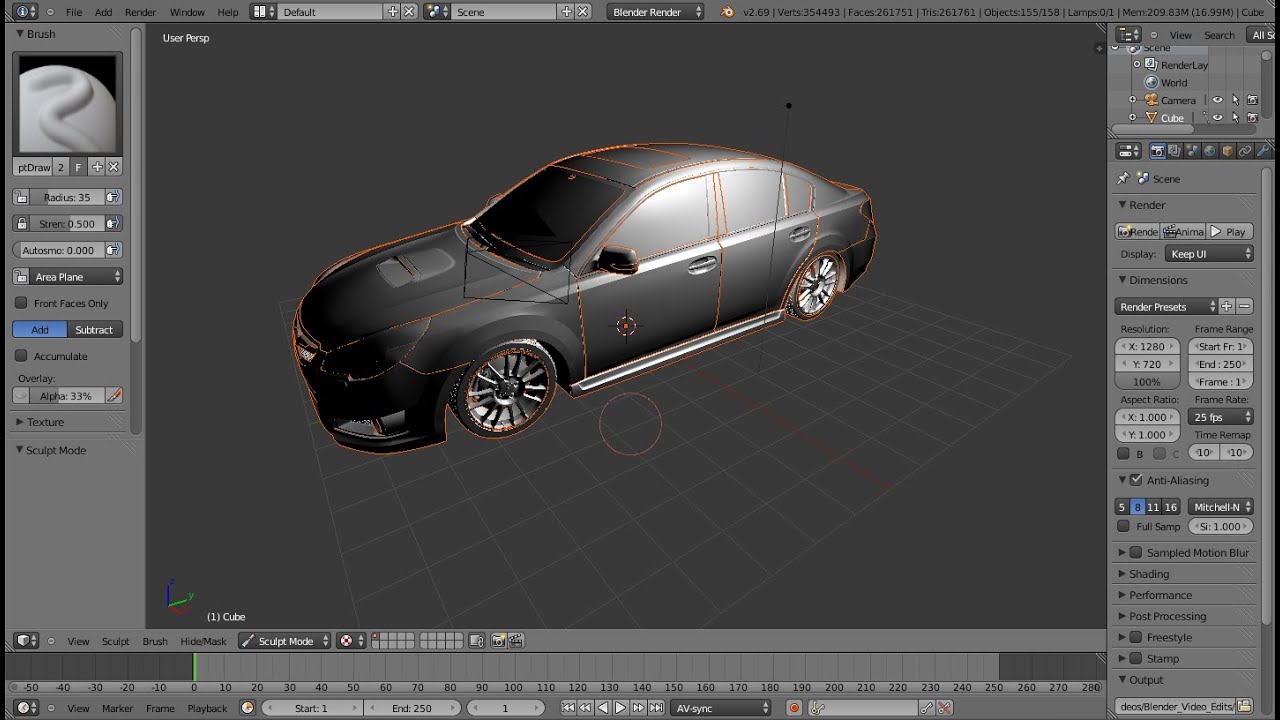

It is also possible to lip sync manually by simply adding your model’s mouth positions to “Object Mode – Object Data – Shape Keys”. (Image: YOGYOG) Automatic Lip Syncing – Rhubarb Plugin However, for a 3D model face, for example, we would recommend using all positions described in the YouTube video by YOGYOG. For a simple 2D animation, four mouth positions may be sufficient. There are dozens of different mouth positions, each covering a particular sound: E, A, O, W, etc. Lip syncing seems to be one of the most difficult tasks in character/object animation. Automatic Lip Syncing – 3DPG Mouth Positions – Shapekeys There may be tools out there better suited for particular tasks, but I find it very convenient to have it all, designing, sculpting, etc., in a software that I am familiar with.

Additionally, you can change the colors of the objects in various transitions. My most recent finds are animation rigs and armatures, tools that allow to create movement for any object you want, in a smooth and natural fashion. The more I use it, the more functionalities I discover.
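Both lip-sync methods come down to the same operation: set shape-key values and insert keyframes at the right frames. The following is a minimal Python sketch of that idea. It is not the Rhubarb plugin's actual code. It assumes a cue export with one `start-time<TAB>mouth-shape` line per cue, which is intended to resemble Rhubarb Lip Sync's TSV output. The helper names `parse_rhubarb_tsv`, `cues_to_keyframes` and `apply_to_blender`, the `shape_map` argument, and any shape-key names you pass in are hypothetical. Only `apply_to_blender` touches Blender, and it uses the standard `bpy` calls `shape_keys.key_blocks` and `keyframe_insert`.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Keyframe:
    frame: int
    shape: Optional[str]  # shape key to switch fully on; None means rest (all keys off)


def parse_rhubarb_tsv(text: str) -> List[Tuple[float, str]]:
    """Parse cue lines of the assumed form '<start time in seconds>\t<mouth shape>'."""
    cues = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        start, shape = line.split("\t")
        cues.append((float(start), shape.strip()))
    return cues


def cues_to_keyframes(
    cues: List[Tuple[float, str]],
    fps: int = 24,
    shape_map: Optional[Dict[str, Optional[str]]] = None,
) -> List[Keyframe]:
    """Convert timed cues to frame numbers and rename mouth letters to shape-key names."""
    shape_map = shape_map or {}
    keys: List[Keyframe] = []
    for start, letter in cues:
        frame = round(start * fps)
        name = shape_map.get(letter, letter)  # unmapped letters are used as-is
        if keys and keys[-1].frame == frame:
            keys[-1] = Keyframe(frame, name)  # two cues on one frame: keep the later one
        else:
            keys.append(Keyframe(frame, name))
    return keys


def apply_to_blender(obj, keys: List[Keyframe]) -> None:
    """Inside Blender, key every used shape key so only the active one is at 1.0."""
    key_blocks = obj.data.shape_keys.key_blocks
    names = sorted({k.shape for k in keys if k.shape is not None})
    for k in keys:
        for name in names:
            block = key_blocks[name]
            block.value = 1.0 if name == k.shape else 0.0
            block.keyframe_insert(data_path="value", frame=k.frame)


# Hypothetical usage from Blender's Python console ("speech.tsv" is a placeholder):
# import bpy
# with open("speech.tsv") as f:
#     keys = cues_to_keyframes(parse_rhubarb_tsv(f.read()),
#                              fps=bpy.context.scene.render.fps,
#                              shape_map={"X": None, "A": "MBP"})
# apply_to_blender(bpy.context.active_object, keys)
```

Mapping a rest cue (for example Rhubarb's idle shape, assumed here to be `X`) to `None` keys every shape to 0.0, so the face falls back to its Basis shape. Because Blender interpolates between keyframes, the mouth blends from one position to the next. For a snappier, 2D-style look you could switch the resulting F-curves to Constant interpolation.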
